AI is a bureaucratic technology. So is fighting war Henry Farrell

...The "modern system" of war doesn't depend on biceps, or even materiel, so much as it does on the complex organizational structures that allow assets to be deployed successfully in ways that reinforce each other. Logistics play an incredibly important role - if you don't get stuff to roughly the right place at the right time, you are going to lose. A myriad of specific decisions taken by individuals need to cumulate properly. That all helps explain why the Pentagon has so much bureaucracy: even if it is inefficient in the specific; even if sometimes it is inefficient in the general, you can't do without it.

That, in turn, helps explain why AI, including both general summarize-and-pull-information-together-and-generate systems like Claude, and more specialized systems for particular purposes are valuable to the Department of Defense. They potentially improve coordination. The "Management Singularity" is incredibly useful to large organizations that have a lot of information to manage.

...the fight between Hegseth and Anthropic, which reportedly turned in large part on the circumstances under which Anthropic's LLM could be used for “lawful surveillance of Americans.” So what kinds of surveillance are, in fact, lawful?

It is widely understood that e.g. the NSA is forbidden from deliberately conducting surveillance on US citizens, by Executive Order 12333. Past scandals (when the NSA e.g. was bugging the Reverend Martin Luther King) led to reforms in the 1970s and after. What is much less well known is that there are no strong legal controls that prevent the US military from purchasing 'open source' information that has been gathered by commercial providers.

There is an entire for-profit equivalent of the surveillance state that gathers data which it sells on to other businesses for targeted advertising and similar. And it doesn't just sell on to other businesses. Government too - including some of the military parts of government - are reportedly enthusiastic customers.

This already presents dilemmas — government can potentially use this data to develop sophisticated profiles of US citizens with existing technologies. But LLMs can potentially greatly increase the abilities of bureaucracies to weave together different sources of data to provide a much more coherent picture of the individual and what they are doing.

4iii26

Whatever This Is, We've Never Seen Anything Like It gizmodo

..."A supply chain risk designation is about excluding a company from bidding for certain contracts in the most highly sensitive DoD IT systems, not prohibiting other companies (even DoD contractors) from routine business dealings with the designated company," Brideman told Gizmodo.

Part of the problem, however, is that we have no sign Hegseth has actually done that as of Wednesday, leading some to speculate there might still be room for a deal with Anthropic. But given the way President Donald Trump and the Pentagon are talking, nobody should be banking on that.

President Trump has spent his second term pushing the boundaries of what's considered legal, often declaring he'll do something unprecedented and leaving legal experts scratching their heads about whether it's even possible under existing law. That's where the Anthropic situation seems to be resting at the moment.

Anthropic CEO Dario Amodei laid out his company's reasons for not agreeing to the Pentagon's terms in a letter on Thursday. Hegseth had given Anthropic a deadline of 5:01 p.m. ET on Friday, and Amodei went to the public, making his case that AI should not be used for domestic surveillance because it's unethical, nor for fully autonomous weapons because the tech just isn't reliable enough yet.

The End of Apps: Why Autonomous AI is About to Delete 80% of Your Screen Shane Collins at Medium

...We are witnessing a brutal restructuring of software power dynamics. When an AI can bypass your screen, directly modify code, handle your Chase credit card bill, and navigate your Mac's system permissions, traditional apps suddenly look like expensive, obsolete roadblocks....In 2022, we marveled at chat windows. In 2025, we obsessed over compute efficiency. In 2026, the narrative has shifted entirely to execution.

Until now, AI acted like a highly educated consultant sitting in a browser tab. It gave you advice, but you had to do the heavy lifting. OpenClaw operates differently. It is a digital employee with "action rights." It lives directly on your local machine and takes over system-level operations.

Instead of acting as a static tool waiting for you to click a button, the agent directly accesses your local files, your contacts, and your browser. The value of digital assets is shifting overnight. We are no longer measuring software by how many features its code covers, but by a proxy's ability to successfully execute a human's intent.

...This marks a massive phase shift in how we use compute power. You no longer need to sit at a desktop. You simply send a voice note to your WhatsApp agent while walking down the street: "Pull my Uber receipts for the week, expense them in Confluence, and email my manager." The agent runs silently in the background, treating your desktop operating system as a quiet, invisible container.

...Giving an agent system-level access is walking into a minefield. The moment you give an AI the ability to read and write files, you expose yourself to severe prompt injection attacks.

...The most expensive asset in the future won't be raw computing power or lines of code. It will be the boundaries and instructions humans give to their agents. The applications are dying. The agents are here.

The "Data Center Rebellion" Is Here O'Reilly

...AI data centers function as grid-scale industrial loads. Individual projects now request 100+ megawatts, and some proposals reach into the gigawatt range. One proposed Michigan facility, for example, would consume 1.4 gigawatts, nearly exhausting the region's remaining 1.5 gigawatts of headroom and roughly matching the electricity needs of about a million homes. This happens because AI hardware is incredibly dense and uses a massive amount of electricity. It also runs constantly. Since AI work doesn't have "off" hours, power companies can't rely on the usual quiet periods they use to balance the rest of the grid.

The politics come down to who pays the bill. Residents in many areas have seen their home utility rates jump by 25% or 30% after big data centers moved in, even though they were promised rates wouldn't change. People are afraid they will end up paying for the power company's new equipment. This happens when a utility builds massive substations just for one company, but the cost ends up being shared by everyone. When you add in state and local tax breaks, it gets even worse. Communities deal with all the downsides of the project, while the financial benefits are eaten away by tax breaks and credits.
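A back-of-envelope check of the Michigan figures, assuming the facility draws its full 1.4 GW around the clock (the passage says AI load "runs constantly") and that "about a million homes" means exactly 1,000,000. The variable names are illustrative, not from the article.

```python
# Back-of-envelope check of the Michigan figures quoted above.
# Assumptions: constant 1.4 GW draw all year; exactly 1,000,000 homes.
HOURS_PER_YEAR = 24 * 365  # 8760

facility_gw = 1.4
headroom_gw = 1.5
homes = 1_000_000

remaining_headroom_gw = headroom_gw - facility_gw
share_of_headroom = facility_gw / headroom_gw

# Average continuous power per home implied by "about a million homes".
kw_per_home = facility_gw * 1e6 / homes  # 1 GW = 1e6 kW
kwh_per_home_per_year = kw_per_home * HOURS_PER_YEAR

print(f"Remaining headroom: {remaining_headroom_gw:.1f} GW")
print(f"Share of headroom used: {share_of_headroom:.0%}")
print(f"Implied average draw per home: {kw_per_home:.1f} kW")
print(f"Implied annual use per home: {kwh_per_home_per_year:,.0f} kWh")
```

The implied figure of roughly 12,000 kWh per home per year is the same order of magnitude as typical US household consumption, so the "million homes" comparison is plausible; the starker number is that only about 0.1 GW of regional headroom would remain.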

...This "data center rebellion" creates a strategic bottleneck that no amount of venture capital can easily bypass. While the US maintains a clear lead in high-end chips, we are hitting a wall on how we manage the mundane essentials like electricity and water. In the geopolitical race, the US has the chips, but China has the centralized command over infrastructure. Our democratic model requires transparency and public buy-in to function. If US companies keep relying on secret deals to push through expensive, overbuilt infrastructure, they risk a total collapse of community trust.

How Many People Does It Take to Kill a ChatGPT? Alberto Romero

...After OpenAI announced its Pentagon deal this past weekend, ChatGPT mobile app uninstalls in the US spiked 295%. Downloads dropped 13%. One-star reviews surged 775% in a single day; five-star reviews fell by half. The QuitGPT campaign—a grassroots initiative that started in January 2026—claims 2.5 million signatures. My entire X timeline is people urging others to cancel ChatGPT and switch to Claude.

5iii26

What Finnegans Wake Teaches Us about AI Paul Massari

Latent Spacecraft: Mapping out the latent spaces where machines learn Nina Begus et al. (2026) at antikythera.org

...Finnegans Wake as latent cartography of language. Joyce's polyglot novel is read as a deliberately engineered linguistic map of latent space. Its nonce words and associative syntax mirror the continuous geometry by which neural models store semantic relations in vectors from the sound to the symbol. This part explores the boundary where signal becomes noise and meaning destabilizes, arguing that Joyce portrays the prenarrative stage of language, thus revealing its interiority.

Finnegans Wake's speech-generating model, FinneGAN. We present a fiwGAN trained on Finnegans Wake audio, which generates speech enabled chiefly by Joyce's idiom in this novel. Automatic transcriptions oscillate between comprehensible English, Irish, and glossolalia, foregrounding the limits of comprehensibility and interpretability of the pre-speech phase. By probing the space of the novel further through its audio, we treat FinneGAN as a language-forming model with interpretative higher entropy than the novel it was trained on, shoving the language further into the pre-narration phase. Exploring FinneGAN's intermediate layers between the most abstract layer and the actual language makes the latent space interpretably navigable. A latent space is not narratable, and we are trying to make it navigable.

...Latent space is a common property of all existing deep neural network models and an intricate component of the images, words, sounds, and other outputs they produce. There is not a single latent space, but each model has its own, which evolves across successive versions.

...Latent spaces emerge from learned structuring. The mathematical form of latent spaces is a learned representation space whose actual layout is not scripted in advance. The algorithm discovers the geometry during the training process, based on the network's architecture, the optimization procedure, and—crucially—the statistical structure of training data. Once this geometry stabilizes, language can emerge from the latent space in the form of words, syntax, and coherence. A double emergence occurs: the latent space is algorithmically emergent from machine learning, and the cognitive-like capacities such as language are emergent from the latent space.

The Accidental Orchestrator Andrew Stellman at O'Reilly

...For the last two years I've been focused on AI, training developers to use these tools effectively, writing about what works and what doesn't in books, articles, and reports. And I kept running into the same problem: I had yet to find anyone with a coherent answer for how experienced developers should actually work with these tools. There are plenty of tips and plenty of hype but very little structure, and very little you could practice, teach, critique, or improve....I've been obsessed with Monte Carlo simulations ever since I was a kid. My dad's an epidemiologist—his whole career has been about finding patterns in messy population data, which means statistics was always part of our lives (and it also means that I learned SPSS at a very early age). When I was maybe 11 he told me about the drunken sailor problem: A sailor leaves a bar on a pier, taking a random step toward the water or toward his ship each time. Does he fall in or make it home? You can't know from any single run. But run the simulation a thousand times, and the pattern emerges from the noise. The individual outcome is random; the aggregate is predictable.

...Monte Carlo is a technique for problems you can't solve analytically: You simulate them hundreds or thousands of times and measure the aggregate results. Each individual run is random, but the statistics converge on the true answer as the sample size grows. It's one way we model everything from nuclear physics to financial risk to the spread of disease across populations.
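A minimal sketch of the drunken sailor problem as a Monte Carlo simulation, assuming a pier divided into `pier_length` equal steps, a sailor who starts at step `start`, and a fair 50/50 step each time (the function names and parameters are illustrative). For this symmetric random walk the exact answer happens to be known (the classic gambler's ruin result, P(ship) = start / pier_length), which makes it a convenient check that the aggregate converges as the number of runs grows.

```python
import random


def sailor_reaches_ship(start: int, pier_length: int, rng: random.Random) -> bool:
    """One run: position 0 is the water, position pier_length is the ship."""
    position = start
    while 0 < position < pier_length:
        position += rng.choice((-1, 1))  # one random step, 50/50 either way
    return position == pier_length


def estimate_p_ship(start: int, pier_length: int, trials: int, seed: int = 0) -> float:
    """Monte Carlo estimate: the fraction of simulated runs that reach the ship."""
    rng = random.Random(seed)
    reached = sum(sailor_reaches_ship(start, pier_length, rng) for _ in range(trials))
    return reached / trials


if __name__ == "__main__":
    start, pier_length = 3, 10
    for trials in (10, 100, 1_000, 10_000):
        print(f"{trials:>6} runs: {estimate_p_ship(start, pier_length, trials):.3f}")
    print(f"exact (gambler's ruin): {start / pier_length:.3f}")
```

Any single walk is unpredictable, but the printed estimates should settle toward 0.300 as the run count grows, which is exactly the "aggregate is predictable" point of the passage.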

6iii26

Future Shock Stephen Downes

I do think we have to think of generative AI this way: as a bicycle of the mind. Just like computers were when they came out. "It is a personal amplifier, not a generic one. It's my bicycle, and I'm the one riding it, going further because of the amplification. I still have to pedal! The bicycle goes in the direction I choose! But I'm going further and more efficiently than I could on foot."

Chinese Text Project augmented by AI translation Victor Mair at Language Log

The Chinese Text Project is an online open-access digital library that makes pre-modern Chinese texts available to readers and researchers all around the world. The site attempts to make use of the digital medium to explore new ways of interacting with these texts that are not possible in print. With over thirty thousand titles and more than five billion characters, the Chinese Text Project is also the largest database of pre-modern Chinese texts in existence....Translations of premodern Chinese texts generated using Artificial Intelligence have been added to ctext.org. This includes all texts in the pre-Qin and Han corpus that previously lacked translations, the 25 dynastic histories, as well as hundreds of other historical, literary, philosophical and poetic works. Translations will be added for other texts on an ongoing basis.

8iii26

Semantics, LLMs and Ontologies Dr Nicolas Figay at Medium

We are going through a period of intellectual turbulence. Under the banner of innovation, we are casually blending statistics (LLMs), structure (ontologies), and meaning (semantics) — three fundamentally distinct layers....we increasingly fall into a form of computational reductionism: the belief that by aligning technological building blocks — knowledge graphs, language models, document repositories — we will somehow "manage knowledge" across complex value chains.

- Semantics Is Not a Property of Data

...When an engine manufacturer, an MRO operator, an airline, and a regulator share a digital ecosystem, each operates within its own semantic registers, professional representations, and tacit ontologies. Attempting to "unify" them in a global graph resembles a form of intellectual Taylorism: transforming living thought into a static crystal....Semantic interoperability between ecosystem actors is as much a governance issue as it is a technological one. It requires negotiated conventions, arbitrations between stakeholders, and translation spaces — not merely automated ontological mappings.

- ...An LLM is probabilistic: it predicts token sequences based on statistical patterns.

An ontology is deterministic: it imposes binary logical constraints. Its value lies precisely in its rigor — but it becomes restrictive when used as a safeguard for a probabilistic system.

...Injecting a rigid ontology into an LLM deployed across a multi-actor ecosystem produces what might be called an augmented black box: a system that responds with structured confidence, wrapped in the official vocabulary of the organization, yet disconnected from the distributed practices of network participants.

...A lack of contextual understanding cannot be corrected through syntactic rules.

A digital ecosystem cannot be governed by token probabilities. It is co-constructed by humans negotiating meaning.
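To make the probabilistic/deterministic contrast concrete, here is a toy sketch. The "model" is only a hand-written probability table standing in for an LLM's next-token distribution, and the "ontology" is a single relation with a fixed domain and range; every entity name and probability is invented for illustration.

```python
import random

# Hypothetical stand-in for an LLM: a fixed distribution over completions of
# "ENGINE-123 is maintained by ___". The numbers are invented.
NEXT_TOKEN_PROBS = {
    "MRO_Operator_A": 0.55,
    "Airline_B": 0.30,
    "Turbine_Blade": 0.15,
}

# Hypothetical toy ontology: typed entities and one relation signature.
ENTITY_TYPES = {
    "ENGINE-123": "Engine",
    "MRO_Operator_A": "Organization",
    "Airline_B": "Organization",
    "Turbine_Blade": "Component",
}
RELATION_SIGNATURES = {"maintainedBy": ("Engine", "Organization")}


def sample_completion(rng: random.Random) -> str:
    """Probabilistic: the same prompt can yield different answers."""
    tokens = list(NEXT_TOKEN_PROBS)
    weights = list(NEXT_TOKEN_PROBS.values())
    return rng.choices(tokens, weights=weights, k=1)[0]


def ontology_accepts(subject: str, relation: str, obj: str) -> bool:
    """Deterministic: the same triple always gets the same yes/no verdict."""
    domain, range_ = RELATION_SIGNATURES[relation]
    return ENTITY_TYPES.get(subject) == domain and ENTITY_TYPES.get(obj) == range_


rng = random.Random(42)
samples = [sample_completion(rng) for _ in range(5)]
for obj in samples:
    print(obj, ontology_accepts("ENGINE-123", "maintainedBy", obj))
```

The ontology reliably rejects `Turbine_Blade` (wrong type), but it accepts both organizations: it can check the form of an answer, not which party actually maintains this engine in this ecosystem. That is the gap the passage describes as something syntactic rules cannot close.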

- ...Anthropomorphizing the machine — attributing understanding or reasoning to it — obscures a fundamental distinction: the machine executes correlations; the human assumes responsibility.

...In the extended enterprise, communities of practice, co-construction spaces, and inter-organizational feedback mechanisms are irreplaceable knowledge infrastructures. Automating them naively destroys their fundamental value: their capacity to generate new meaning.

- Four operational principles can help realign technology with collaboration:

- Ontologies as interoperability protocols, not shared truths...

- LLMs as research and synthesis assistants, never decision-makers...

- Semantic heterogeneity as a resource, not a defect...

- Investment in distributed knowledge governance.

The engineer of digital collaboration in the future will not be the one who replaces experts with algorithms.

It will be the one who draws a clear boundary between the mechanical processing of information — automatable and scalable — and the social construction of knowledge — human, critical, and irreducibly distributed.

...If we allow machines to define our shared semantics, we risk losing our collective capacity to understand why systems work, to detect weak signals of emerging failure, and to improvise in the face of the unexpected.

The danger does not lie in an overly powerful AI.

It lies in misplaced trust that disarms our collective capacity for meaning.

LLMs see shadows. World models see reality. Enrique Dans at Medium

...Researchers with experience in modeling the world, who have been teaching machines to simulate realities, share my view that the next advance in AI will not come from scaling up LLMs, but from designing models capable of creating their own representation of the world.

But let's start at the beginning: what is a world model? Imagine a mind that doesn't just repeat what it reads, and instead builds an internal simulator. That simulator receives sensations (images, sensors, text, interactions), learns implicit rules of physics, causality, and agency and can execute "counterfactual thoughts": if I push the glass, and it falls, does it break? A world model is not just a feature that predicts the next word; it is a machine that can generate possible futures and evaluate actions in those futures, within a compact and differentiable representation. The first academic works that formalized this idea are not new: in 2018, David Ha and Jürgen Schmidhuber wrote about training an agent "entirely inside of its own hallucinated dream generated by its world model", and transferring this policy back into the actual environment.

...LLMs are widely used because they are incredibly good at linguistic tasks and, above all, because their business is simple to capitalize on the idea of big data + big models = conversational products and APIs. But that equation has practical, energy, and conceptual limits: LLMs “see shadows”, texts that describe the world, and not the dynamics of the world itself, so they fail and hallucinate in physical reasoning, object persistence, agent modeling, and long-term plans. In contrast, a model of the world aspires to understand how the world changes when we act in it, and is therefore the natural tool for robotics, simulation, strategic planning and autonomous agents. If the AI of the future is to make confident decisions in the real world, it will need representation that captures continuity, causation, and the relationship between action and outcome.
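A toy sketch of the "counterfactual thoughts" idea: a hand-written transition function stands in for a learned world model, and the agent chooses a plan by rolling candidate action sequences forward inside that model before acting. This is plain model-based planning by exhaustive rollout, not Ha and Schmidhuber's architecture (which learned the model from observations and trained a controller inside it); the table, positions, and reward are hypothetical.

```python
from itertools import product
from typing import NamedTuple, Tuple


class State(NamedTuple):
    position: int  # glass position along the table; TABLE_EDGE is the last safe spot
    broken: bool


TABLE_EDGE = 3  # hypothetical table: positions 0..3 are on the table
ACTIONS = ("push", "wait")


def world_model(state: State, action: str) -> State:
    """Stand-in for a learned transition model: predicts the next state."""
    if state.broken or action == "wait":
        return state
    new_position = state.position + 1
    # Pushing past the edge: the glass falls, and the model predicts it breaks.
    return State(new_position, new_position > TABLE_EDGE)


def reward(state: State, target: int) -> float:
    if state.broken:
        return -10.0
    return -float(abs(state.position - target))


def imagine(state: State, plan: Tuple[str, ...]) -> State:
    """Roll a plan forward inside the model, without acting in the real world."""
    for action in plan:
        state = world_model(state, action)
    return state


def plan_in_imagination(start: State, target: int, horizon: int):
    """Evaluate every action sequence in imagination and keep the best one."""
    best = max(product(ACTIONS, repeat=horizon),
               key=lambda plan: reward(imagine(start, plan), target))
    return best, imagine(start, best)


plan, outcome = plan_in_imagination(State(0, False), target=TABLE_EDGE, horizon=5)
print(plan, outcome)
```

The glass question itself is a single imagined step: `imagine(State(3, False), ("push",))` returns `State(position=4, broken=True)` without any real glass being pushed. Exhaustive search only works for toy horizons; it is used here because it makes the "evaluate actions in possible futures" step easy to see.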

From MIT Tech Review: How AI is turning the Iran conflict into theater

Welcome back to The Algorithm!

"Anyone wanna host a get together in SF and pull this up on a 100 inch TV?"

The author of that post on X was referring to an online intelligence dashboard following the US-Israel strikes against Iran in real time. Built by two people from the venture capital firm Andreessen Horowitz, it combines open-source data like satellite imagery and ship tracking with a chat function, news feeds, and links to prediction markets, where people can bet on things like who Iran's next "supreme leader" will be (the recent selection of Mojtaba Khamenei left some bettors with a payout).

I've reviewed over a dozen other dashboards like this in the last week. Many were apparently “vibe-coded” in a couple of days with the help of AI tools, including one that got the attention of a founder of the intelligence giant Palantir, the platform through which the US military is accessing AI models like Claude during the war. Some were built before the conflict in Iran, but nearly all of them are being advertised by their creators as a way to beat the slow and ineffective media by getting straight to the truth of what's happening on the ground. “Just learned more in 30 seconds watching this map than reading or watching any major news network,” one commenter wrote on LinkedIn, responding to a visualization of Iran's airspace being shut down before the strikes.

...Much of the spotlight on AI and the Iran conflict has rightfully been on the role that models like Claude might be playing in helping the US military make decisions about where to strike. But these intelligence dashboards and the ecosystem surrounding them reflect a new role that AI is playing in wartime: mediating information, often for the worse.

There's a confluence of factors at play. AI coding tools mean people don't need much technical skill to assemble open-source intelligence anymore, and chatbots can offer fast, if dubious, analysis of it. The rise in fake content leaves observers of the war wanting the sort of raw, accurate analysis normally accessible only to intelligence agencies. Demand for these dashboards is also driven by real-time prediction markets that promise financial rewards to anyone sufficiently informed. And the fact that the US military is using Anthropic's Claude in the conflict (despite its designation as a supply chain risk) has signaled to observers that AI is the intelligence tool the pros use. Together, these trends are creating a new kind of AI-enabled wartime circus that can distort the flow of information as much as it clarifies it.

10iii26

TechScape at The Guardian

The US-Israel war on Iran shows that datacenters are a new frontier in warfare

Iran is bombing datacenters in the Persian Gulf to blow up symbols of the Gulf states' technological alliance with the United States. Added bonus: they will be extremely costly to rebuild, being among the most expensive buildings in history. My colleague Daniel Boffey reports:

It is believed to be a first: the deliberate targeting of a commercial datacenter by the armed forces of a country at war.

At 4.30am on Sunday morning, an Iranian Shahed 136 drone struck an Amazon Web Services datacenter in the United Arab Emirates, setting off a devastating fire and forcing a shutdown of the power supply. Further damage was inflicted as attempts were made to suppress the flames with water.

Soon after, a second datacenter owned by the US tech company was hit. Then a third was said to be in trouble, this time in Bahrain, after an Iranian suicide drone turned to fireball on striking land nearby.

Iranian state TV has claimed that Iran's Islamic Revolutionary Guard Corps launched the attack "to identify the role of these centers in supporting the enemy's military and intelligence activities".

The coordinated strike had an immediate impact. Millions of people in Dubai and Abu Dhabi woke up on Monday unable to pay for a taxi, order a food delivery or check their bank balance on their mobile apps.

Whether there was a military impact is unclear — but the strikes swiftly brought the war directly into the lives of 11 million people in the UAE, nine out of 10 of whom are foreign nationals. Amazon has advised its clients to secure their data away from the region.

The first apes to walk upright may have evolved in Europe New Scientist

A single femur found in Bulgaria appears to represent an ape or early hominin that walked on two legs before any known African hominin, but the evidence is far from conclusive

How an intern helped build the AI that shook the world New Scientist

...When I joined, there was another little team at DeepMind that I was going to work with, which was Aja Huang and David Silver, that had started working on Go. It was basically my charge to start building the neural networks. It was a dream. There were a bunch of different approaches that we tried, and a lot of the initial things we tried failed. Eventually, I just got frustrated and tried the dumbest, simplest thing, which was to try to predict the next move that an expert would make in a given board position, training a neural network on a big corpus of expert games. And that turned out to be the approach that really got us off the ground. By the end of the summer, we hosted a little match with DeepMind's Thore Graepel, who considered himself a decent Go player, and my networks beat him. DeepMind then started to be convinced that this was going to be a real thing and started putting resources towards it and building a big team around it.
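The "dumbest, simplest thing" described here is supervised classification: given a board position, predict the expert's move, training on (position, move) pairs with a cross-entropy loss. A minimal sketch of that setup follows, under heavy assumptions: a 3x3 toy board instead of 19x19, synthetic "expert" games produced by an invented rule, and a linear softmax model standing in for the deep network DeepMind actually trained.

```python
import math
import random

BOARD_POINTS = 9  # hypothetical 3x3 toy board (Go uses 19x19 = 361 points)


def make_expert_games(n: int, rng: random.Random):
    """Synthetic (position, expert_move) pairs from an invented 'expert' rule:
    the opponent has one stone at point i (encoded as -1), and the expert
    answers at point (i + 1) % BOARD_POINTS."""
    games = []
    for _ in range(n):
        i = rng.randrange(BOARD_POINTS)
        position = [0.0] * BOARD_POINTS
        position[i] = -1.0
        games.append((position, (i + 1) % BOARD_POINTS))
    return games


def softmax(logits):
    top = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - top) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


class MovePredictor:
    """Linear softmax policy: one weight vector and one bias per candidate move."""

    def __init__(self):
        self.w = [[0.0] * BOARD_POINTS for _ in range(BOARD_POINTS)]
        self.b = [0.0] * BOARD_POINTS

    def probs(self, x):
        logits = [sum(wj * xj for wj, xj in zip(w_m, x)) + b_m
                  for w_m, b_m in zip(self.w, self.b)]
        return softmax(logits)

    def train_step(self, x, move, lr=0.1):
        """One SGD step on the cross-entropy loss -log p(expert move | position)."""
        p = self.probs(x)
        for m in range(BOARD_POINTS):
            grad = p[m] - (1.0 if m == move else 0.0)  # d loss / d logit_m
            self.b[m] -= lr * grad
            for j in range(BOARD_POINTS):
                self.w[m][j] -= lr * grad * x[j]

    def predict(self, x):
        p = self.probs(x)
        return max(range(BOARD_POINTS), key=lambda m: p[m])


def mean_loss(model, games):
    return sum(-math.log(model.probs(x)[move]) for x, move in games) / len(games)


def accuracy(model, games):
    return sum(model.predict(x) == move for x, move in games) / len(games)


rng = random.Random(0)
games = make_expert_games(500, rng)
model = MovePredictor()
loss_before = mean_loss(model, games)
for _ in range(20):  # epochs
    rng.shuffle(games)
    for x, move in games:
        model.train_step(x, move)
loss_after = mean_loss(model, games)
acc_after = accuracy(model, games)
print(f"loss {loss_before:.3f} -> {loss_after:.3f}, move accuracy {acc_after:.0%}")
```

The untrained model starts from a uniform guess over the nine points (loss ln 9); training pushes probability mass onto the expert's reply. The real system differed in scale and architecture, but the objective, imitating expert moves, is the same idea.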

Meta is buying Moltbook lifehacker

The AI Productivity Trap: 3 Silent Shifts Rewriting Economics, Truth, and Reality Shane Collins on Medium

...When OpenAI released ChatGPT, our immediate instinct was to force this god-like technology into a text box, making it act like a human assistant. This is a massive failure of imagination. We are looking at artificial intelligence through the lens of a dead world. We view it with the same naive excitement that 19th-century Europeans felt when they first saw a steam locomotive — we marvel at the smoke and speed, completely failing to grasp how the tracks will rewrite the entire map of human civilization.

We are treating AI like a simple software update. In reality, it is a complete restructuring of human power.

Will AI Accelerate or Undermine the Way Humans Have Always Innovated? The Conversation U.S. at Medium

...The wheel may have emerged from copper-mining communities. One expert sourced copper from the Balkans, another transported it, another smelted it. By about 4000 B.C., additional specialists cast copper into an early wheel-shaped amulet: shaping a wax model, encasing it in clay, firing it in a kiln, pouring molten metal into the mold, then breaking the mold away.

Transport technologies reshaped ancient product networks. As communities across Eurasia and Africa built wheeled vehicles and ships, and raised domesticated horses and other pack animals, collaboration expanded across continents. Maritime and overland trade linked blacksmiths, scribes, religious scholars, bead makers, silk weavers, and tattoo artists.

The Plot Against Intelligence, Human and Artificial Paul Krugman

...There's no mystery about the motivation for banning Claude. Anthropic has said that it wants assurances that its products won't be used for fully autonomous weapons or mass surveillance of Americans. This has enraged Trump officials: David Sacks, the administration's AI and crypto czar, has accused the company of supporting "woke AI." So an administration for which seeking vengeance against perceived enemies is a central motivation is naturally trying to punish Anthropic and damage its business.

But the fact that the Trumpist-Anthropic feud is understandable doesn't make it normal or acceptable. In fact, the designation of Anthropic as a supply chain risk is a terrible omen for America's future, in at least three ways.

First, it's obviously illegal. Designating a potential contractor a supply-chain risk isn't something the government is supposed to do casually. The legal basis for such a designation, embodied in the federal government's acquisition guidelines, is very specific:

"Supply chain risk" means the risk that an adversary may sabotage, maliciously introduce unwanted function, or otherwise subvert the design, integrity, manufacturing, production, distribution, installation, operation, or maintenance of a covered system so as to surveil, deny, disrupt, or otherwise degrade the function, use, or operation of such system (see 10 U.S.C. 3252).

So supply chain risk is about sabotage or subversion. "This company is too woke" doesn't meet that definition.

Second, denying government contracts to a company because the administration doesn't like that company's politics is a seriously corrupt practice. Think of it as the flip side of crony capitalism: while throwing taxpayer dollars at companies it considers friends — especially because they personally enrich members of the administration or the president's family — the administration is freezing out companies it considers enemies. If this practice becomes the norm, as it surely will if these people remain in power, it will waste money because the government is denying contracts to vendors who offer the best value but aren't sufficiently MAGA. It will also further corrupt our politics, as businesses feel the need to be demonstratively pro-Trump if they want federal contracts.

Finally, the Defense Department is now doing exactly what people like Hegseth have always accused supporters of DEI of doing — refusing to hire the best people for the job, refusing to give contracts to the best suppliers, in the name of political correctness. The Pentagon's managers and tech experts clearly believe that Claude is the best tool for many purposes, but they have been ordered not to use it because their political masters don't like the company's politics.

Fast Paths and Slow Paths Varun Raj at O'Reilly

...As AI systems scale beyond isolated pilots into continuously operating multi-agent environments, universal mediation becomes not just expensive but structurally incompatible with autonomy itself. The challenge is not choosing between control and freedom. It is learning how to apply control selectively, without destroying the very properties that make autonomous systems useful.

This article examines how that balance is actually achieved in production systems—not by governing every step but by distinguishing fast paths from slow paths and by treating governance as a feedback problem rather than an approval workflow.

The first generation of enterprise AI systems was largely advisory. Models produced recommendations, summaries, or classifications that humans reviewed before acting. In that context, governance could remain slow, manual, and episodic.

That assumption no longer holds. Modern agentic systems decompose tasks, invoke tools, retrieve data, and coordinate actions continuously. Decisions are no longer discrete events; they are part of an ongoing execution loop. When governance is framed as something that must approve every step, architectures quickly drift toward brittle designs where autonomy exists in theory but is throttled in practice.

The critical mistake is treating governance as a synchronous gate rather than a regulatory mechanism. Once every reasoning step must be approved, the system either becomes unusably slow or teams quietly bypass controls to keep things running. Neither outcome produces safety.

The real question is not whether systems should be governed but which decisions actually require synchronous control—and which do not.
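A minimal sketch of the fast-path/slow-path split, assuming a made-up risk rule (irreversible actions, or actions touching more than a threshold number of records, need synchronous approval) and a crude feedback rule that shrinks the fast-path envelope after incidents. `Action`, `Governor`, and every threshold are hypothetical illustrations, not the article's design.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class Action:
    name: str
    reversible: bool
    blast_radius: int  # hypothetical: how many records or systems the action touches


@dataclass
class Governor:
    """Hypothetical governance layer: synchronous control only where it is needed."""

    approve: Callable[[Action], bool]  # slow path: blocks until a decision is made
    max_fast_blast_radius: int = 10    # fast-path envelope, adjusted by feedback
    audit_log: List[Tuple[str, str]] = field(default_factory=list)

    def is_high_risk(self, action: Action) -> bool:
        return (not action.reversible) or action.blast_radius > self.max_fast_blast_radius

    def execute(self, action: Action, run: Callable[[Action], str]) -> str:
        path = "slow" if self.is_high_risk(action) else "fast"
        if path == "slow" and not self.approve(action):
            return "blocked"
        result = run(action)
        # Every executed action is logged; fast-path actions are reviewed after
        # the fact instead of being gated up front.
        self.audit_log.append((action.name, path))
        return result

    def update_from_feedback(self, incidents: List[str]) -> None:
        """Feedback loop: shrink the fast-path envelope when incidents are reported."""
        if incidents:
            self.max_fast_blast_radius = max(1, self.max_fast_blast_radius // 2)


approvals: List[str] = []


def human_approves(action: Action) -> bool:
    approvals.append(action.name)
    return action.name != "drop_table"


def run(action: Action) -> str:
    return f"done:{action.name}"


gov = Governor(approve=human_approves)
print(gov.execute(Action("read_invoice", True, 1), run))    # fast path: done:read_invoice
print(gov.execute(Action("refund_batch", True, 500), run))  # slow path, approved: done:refund_batch
print(gov.execute(Action("drop_table", False, 1), run))     # slow path, rejected: blocked
gov.update_from_feedback(["refund sent twice"])
print(gov.max_fast_blast_radius)                            # 5
```

Only two of the three actions ever wait on a human; the low-risk read runs immediately and is merely logged. The feedback rule is deliberately simple; a real system would tune the envelope from richer signals than an incident count.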

New Kinds of Applications Mike Loukides at O'Reilly

...I really wouldn't have expected to see a social network for agents. I'm among the many people who don't really understand what a network like Moltbook means. Watching it is something of a spectator sport. It's easy for a human to "impersonate" an agent, though I suspect such impersonation is relatively rare. I also suspect (but obviously can't prove) that most of the posts reflect agents' responses to prompts from their "humans." Or are Moltbook posts truly AI-native? How would we know? (Yeah, you can tell AI output because it has too many em dashes. That's nonsense. AIs overuse em dashes because humans overuse em dashes. Guilty as charged. Trying to change.) Moltbook doesn't demonstrate some kind of native AI intelligence, though it's fun to pretend that it does. Agents, if they're indeed acting on their own, are just reflecting the behavior of humans on Reddit and other social media. The timer that wakes them up periodically is both clever and a demonstration that, whatever else they may be, agents are human creations that act under our control. They do nothing of their own volition. To think otherwise is to confuse the bird in a cuckoo clock with an actual bird, as Fred Benenson has put it. However, BS about AGI aside, Moltbook is a fantastically clever app that I, at least, wouldn't have imagined. Even if Moltbook was only created because it can now be built for relatively little effort—that is important in itself. We're all writing software we wouldn't have bothered with a year ago.

What OpenClaw Reveals About the Next Phase of AI Agents O'Reilly

AI "journalists" prove that media bosses don't give a shit Cory Doctorow

...Rawdogging a Yahoo News article means fighting through a forest of pop-ups, pop-unders, autoplay video, interrupters, consent screens, modal dialogs, modeless dialogs – a blizzard of news-obscuring crapware that oozes contempt for the material it befogs. Irrespective of the words and icons displayed in these DOM objects, they all carry the same message: "The news on this page does not matter."

The owners of news services view the news as a necessary evil. They aren't a news organization: they are an annoying pop-up and cookie-setting factory with an inconvenient, vestigial news entity attached to it. News exists on sufferance, and if it was possible to do away with it altogether, the owners would.

That turns out to be the defining characteristic of work that is turned over to AI. Think of the rapid replacement of customer service call centers with AI. Long before companies shifted their customer service to AI chatbots, they shifted the work to overseas call centers where workers were prohibited from diverging from a script that made it all but impossible to resolve your problems:

https://pluralistic.net/2025/08/06/unmerchantable-substitute-goods/#customer-disservice

These companies didn't want to do customer service in the first place, so they sent the work to India. Then, once it became possible to replace Indian call center workers who weren't allowed to solve your problems with chatbots that couldn't resolve your problems, they fired the Indian call center workers and replaced them with chatbots. Ironically, many of these chatbots turn out to be call center workers pretending to be chatbots (as the Indian tech joke goes, "AI stands for 'Absent Indians'"):

https://pluralistic.net/2024/01/29/pay-no-attention/#to-the-little-man-behind-the-curtain

"We used an AI to do this" is increasingly a way of saying, "We didn't want to do this in the first place and we don't care if it's done well." That's why DOGE replaced the call center reps at US Customs and Immigration with a chatbot that tells you to read a PDF and then disconnects the call:

https://pluralistic.net/2026/02/06/doge-ball/#n-600

The Trump administration doesn't want to hear from immigrants who are trying to file their bewildering paperwork correctly. Incorrect immigration paperwork is a feature, not a bug, since it can be refined into a pretext to kidnap someone, imprison them in a gulag long enough to line the pockets of a Beltway Bandit with a no-bid contract to operate an onshore black site, and then deport them to a country they have no connection with, generating a fat payout for another Beltway Bandit with the no-bid contract to fly kidnapped migrants to distant hellholes.

If the purpose of a customer service department is to tell people to go fuck themselves, then a chatbot is obviously the most efficient way of delivering the service. It's not just that a chatbot charges less to tell people to go fuck themselves than a human being — the chatbot itself means "go fuck yourself." A chatbot is basically a "go fuck yourself" emoji. Perhaps this is why every AI icon looks like a butthole.

When AI remembers everything and organisations forget how to choose Doug Belshaw

This is the third in a series of posts using stories from Jorge Luis Borges' collection Labyrinths to examine how AI is reshaping decision-making in mission-driven organisations. My first post explored how AI systems become inescapable in "The (AI) lottery is already running." The second looked at how they can narrow our choices before we know we are choosing in "When AI tools give you choices." This third post comes at things from a different angle: what happens when the tools give us everything we asked for — and it turns out that everything is... too much? (notes Dymaxion Chronofile of Buckminster Fuller)

13iii26

From Systems Thinking to Component Architecture: A Forgotten Evolution Dr Nicolas Figay at Medium

...If we don't design for emergence deliberately (through proper decoupling, clear interfaces, and event-driven communication), we get accidental emergence — also known as bugs, unpredictable failures, and production incidents.
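The decoupling the excerpt recommends can be illustrated with a minimal in-process publish/subscribe bus. This is a hypothetical sketch (the `EventBus` class and the `order_placed` topic are invented for illustration), not the author's design: the publisher knows only a topic name, never which components react to it, so components can be added or removed without touching it.

```python
from collections import defaultdict
from typing import Any, Callable


class EventBus:
    """Minimal in-process publish/subscribe bus (illustrative, not production-grade)."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Callable[[Any], None]]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Callable[[Any], None]) -> None:
        # Components register interest in a topic, not in a specific publisher.
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, payload: Any) -> int:
        # .get() avoids creating empty entries for topics nobody listens to.
        handlers = list(self._subscribers.get(topic, []))
        for handler in handlers:
            handler(payload)
        return len(handlers)  # how many handlers received the event


# Usage: billing and shipping react to orders without the order code knowing they exist.
bus = EventBus()
billed, shipped = [], []
bus.subscribe("order_placed", lambda order: billed.append(order["id"]))
bus.subscribe("order_placed", lambda order: shipped.append(order["id"]))
delivered = bus.publish("order_placed", {"id": 42})
print(delivered, billed, shipped)
```

A synchronous, in-process bus like this keeps the example small; distributed systems usually put a message broker in the same position, which adds its own failure modes.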

Three more AI psychoses Cory Doctorow

...Think of how often you noticed "42" after reading Hitchhiker's Guide to the Galaxy, or how many times "6-7" crops up once you've experienced a baseline of exposure to adolescents. Now imagine that an obsequious tale-spinner was sitting at your elbow, helpfully noting these coincidences and fitting them into a folie à deux mystery play that projected a grand, paranoid narrative onto the world. Every bit of confirming evidence is lovingly cataloged; all disconfirming evidence is discounted or ignored. It's fully automated luxury QAnon — a self-baking conspiracy that harnesses an AI in service to driving you deeper and deeper into madness. That's the original "AI psychosis" that the term was coined to describe.

...Let's start with the investors' delusion. AI started as an investment project from the usual suspects: venture capitalists, private wealth funds, and tech monopolists with large cash reserves and ready access to loans during the cheap credit bubble. These entities are accustomed to making large, long-shot bets, and they were extremely motivated to find new markets to grow into and take over. Growing companies need to keep growing, but not because they have "the ideology of a tumor." Growing companies' imperative to keep growing isn't ideological at all — it's material. Growth companies' stock trades at a high multiple of their earnings (a high "price to earnings ratio," or PE ratio), which means that they can use their stock like money when buying other companies and hiring key employees. But once those companies' growth slows down, investors revalue those shares at a much lower PE multiple, which makes individual executives at the company (who are primarily paid in stock) personally much poorer, prompting their departure, while simultaneously kneecapping the company's ability to grow through acquisition and hiring, because a company with a falling share price has to buy things with cash, not stock.
Companies can make more of their own stock on demand, simply by typing zeroes into a spreadsheet — but they can only get cash by convincing a customer, creditor or investor to part with some of their own.
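The mechanism is simple arithmetic. A rough sketch with hypothetical numbers (a company earning $1B a year, a $5B acquisition paid entirely in stock), ignoring debt, deal premiums, and everything else a real valuation would include:

```python
def market_cap(earnings: float, pe_multiple: float) -> float:
    # Market value = annual earnings x the price-to-earnings multiple investors assign.
    return earnings * pe_multiple


def dilution_to_buy(target_price: float, earnings: float, pe_multiple: float) -> float:
    # Fraction of the company's current value that must be handed over as new stock.
    return target_price / market_cap(earnings, pe_multiple)


EARNINGS = 1e9  # hypothetical: $1B in annual earnings
TARGET = 5e9    # hypothetical: a $5B acquisition paid in stock

for pe in (50, 15):
    value_bn = market_cap(EARNINGS, pe) / 1e9
    share = dilution_to_buy(TARGET, EARNINGS, pe)
    print(f"PE {pe}: company worth ${value_bn:.0f}B, deal costs {share:.1%} of it")
```

Same earnings, same target: at a PE of 50 the deal costs a tenth of the company, at a PE of 15 it costs a third, and every stock-paid executive's holdings shrink by 70% along the way.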

...There's a different advantage to confining your concerns to imaginary things: imaginary things don't exist, so they don't contest your public statements about them, nor do they make demands on you. Think of how the right concerns itself with imaginary children (unborn babies, children in Wayfair furniture, children in nonexistent pizza parlor basements, children undergoing gender confirmation surgery). These are very convenient children to advocate for, since, unlike real children (hungry children, children killed in the Gaza genocide, children whose parents have been kidnapped by ICE, children whom Matt Gaetz and Donald Trump trafficked for sex, children in cages at the US border, trans kids driven to self-harm and suicide after being denied care), nonexistent children don't want anything from you and they never make public pronouncements about whether you have their best interests at heart.

Artificial intelligence is just underpaid human labor boingboing

Learning to Navigate the Uncertain Future with AI H-Corps

John Seely Brown's genuinely hopeful idea: that AI, approached thoughtfully, could make us sharper learners and more attuned to the world around us. The opportunity is in asking better questions, reading context more deeply, and learning alongside each other in ways that weren't possible before.

Weekly Top Picks Alberto Romero

THE WEEK IN AI AT A GLANCE

- Money & Business: More and more top engineers and developers are leaving xAI as Grok falls behind ChatGPT, Claude, and Gemini.

- Geopolitics: Claude was used to select targets in the Iran strikes. One of them was a girls' school.

- Work & Workers: The question isn't whether AI will automate your job but whether AI will make your job irrelevant.

- Products & Capabilities: Meta delays its new flagship model after it fails to match the frontier. Meanwhile, the top three labs reach escape velocity.

- Trust & Safety: A one-man ethical hacking outfit breached McKinsey's AI platform in two hours, which says it all about the safety of AI.

- Culture & Society: People really hate AI. The polls confirm it.

- Philosophy: A NYT quiz went viral. People prefer AI writing, but the reason comes down to the thin line between belief and value.

...Musk is known for successfully restructuring entire teams, so that's not necessarily the problem here (as Peter Thiel once said, you don't bet against Elon); the issue is something else that, unfortunately, not even Musk can deal with: Grok is behind on coding. "Oh, ok, that's why they hired a couple of heavyweights from Cursor, right?" Sure, but the problem is that being behind in coding now means that you don't have good enough AI models to build your next generation of models. xAI has fallen behind Anthropic, OpenAI, and Google DeepMind at the worst possible moment.

...The Wall Street Journal reported that the US Central Command used Anthropic's Claude for intelligence assessments, target identification, and simulating battle scenarios during the strikes on Iran. The Washington Post reported that Claude is embedded in Palantir's Maven Smart System, which suggested hundreds of targets, issued precise coordinates, and prioritized them by importance, enabling the US military to strike over 1,000 targets in the first 24 hours. What had previously been weeks-long battle planning became, in the Post's sourcing, real-time operations.

...The ATM tried to do the teller's job, but it was actually the iPhone that made the teller's job pointless. Companies are mostly doing ATM thinking with AI. They're slotting it into existing workflows, enhancing existing tasks, etc., and finding that it mostly doesn't work that well. You can't fit capital into "labor-shaped holes," to use [David] Oks's expression.

A world made by and for humans will find a way to need humans; at most, you move the bottlenecks around while keeping progress advancing at the speed of meat.

...Musk tried to brute-force his way to the frontier with compute and speed; Zuckerberg tried to buy his way there with capital and acquisitions. Neither approach worked, and both companies are now reorganizing in the aftermath. The reason is the same: Anthropic, OpenAI, and Google DeepMind have entered recursive self-improvement loops, and that flywheel compounds faster than any amount of money or manpower can match from the outside.

We might not be near AGI—who knows—but recursive self-improvement is like escape velocity. You either reach it or you will be hitting the ground with a big crash.

Anthropic and Donald Trump's Dangerous Alignment Problem Gideon Lewis-Kraus at The New Yorker

...Intelligence contractors, like Palantir, offer platforms that synthesize, process, and surface decision-relevant information. Palantir's workflow includes an integrated suite of A.I. models selected from a drop-down menu. As one Palantir employee told me, "Claude is just the best, by far." A human analyst might review signal intelligence to select military targets; Claude can do the same thing, only much faster and more efficiently.

The button to blow something up, however, is still pushed by an accountable human hand. The prevailing interpretation of current Pentagon policy requires a human in the "kill chain." Claude, as far as Amodei was concerned, was in any case not ready for unsupervised combat operations. But it eventually would be unignorably powerful. At that point, Amodei reasoned, the government might even nationalize A.I. by hook or by crook. Amodei hoped that his early decision to enlist Claude in active duty would put him in a position to influence future terms of engagement—not only to satisfy his own conscience but to set an industry precedent. Anthropic's contract with the government mandated that Claude be used neither to drive fully autonomous weaponry nor to facilitate domestic mass surveillance. The Pentagon accepted these stipulations.

...At some point this past fall, Hegseth's under-secretary for research and engineering, the former Uber executive Emil Michael, reviewed the Pentagon's arrangement with Anthropic and was dismayed to find that Claude could not be deployed according to the government's every whim. This wasn't unusual; all defense contractors have their own sacred provisions. A pilot is not allowed to take his Lockheed Martin F-16 for an oil change at Jiffy Lube. But Michael assessed Anthropic's terms as both restrictive and sanctimonious. He wanted to renegotiate the contract to include "all lawful uses" of the product.

16iii26

A Fraudster's Paradise O'Reilly

17iii26

Shameless Guesses, Not Hallucinations Astral Codex Ten

...the interesting question isn't why AIs hallucinate: during training, guessing correctly is rewarded, guessing incorrectly isn't punished, so the rational strategy is to always guess (and increase your chance of being right from 0 to 0.001%). Since AIs in normal consumer use follow the strategies they learned during training, they guess there too. The interesting question is why AIs sometimes don't hallucinate. Here the answer is that the AI starts out hallucinating 100% of the time, the AI companies do things during post-training to bring that number down, and eventually they reduce it to "acceptable levels" and release it to users.

How do we know this is what's happening? When researchers observe an AI mid-hallucination, they see that the model activates features related to deception, i.e., it fails an AI lie detector test. The original title of this post was "Lies, Not Hallucinations" and I still like this framing — the AI knows what it's doing, in the same way you'd know you were trying to pull one over on your teacher by writing a fake essay. But friends talked me out of the lie framing. The AI doesn't have a better answer than "John Smith". It's giving its real best guess, while knowing that the chance it's right is very small.
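The incentive argument is expected-value arithmetic. A minimal sketch, assuming a simplified scoring rule (1 point for a correct answer, 0 for abstaining, and a configurable penalty for a wrong answer, which the post says is effectively zero during training); this illustrates the argument and is not any lab's actual training objective:

```python
ABSTAIN_SCORE = 0.0  # saying "I don't know" earns nothing


def expected_guess_score(p_correct: float, wrong_penalty: float) -> float:
    """Expected reward for answering: +1 if right, -wrong_penalty if wrong."""
    return p_correct * 1.0 - (1.0 - p_correct) * wrong_penalty


def should_guess(p_correct: float, wrong_penalty: float) -> bool:
    # A reward-maximizing policy answers whenever guessing beats abstaining.
    return expected_guess_score(p_correct, wrong_penalty) > ABSTAIN_SCORE


long_shot = 0.00001  # the post's "0.001%" chance of being right

print(should_guess(long_shot, wrong_penalty=0.0))  # no penalty: guessing still wins
print(should_guess(long_shot, wrong_penalty=1.0))  # wrong answers cost a point: abstain
```

With a penalty of w for a wrong answer, guessing pays only when the chance of being right exceeds w/(1+w); a penalty of 1 means answering only when more than 50% sure, while a penalty of 0 means answering always.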

Why does this matter? I often see people in the stochastic parrot faction say that AIs can't be doing anything like humans, because they have this bizarre inhuman failure mode, "hallucinations," which is incompatible with being a normal mind that has some idea what's going on. Therefore, it must be some kind of blind pattern-matching algorithm. Calling them "shameless guesses" hammers in that the AI is doing something so human and natural that you probably did it yourself during your student days.

Understood correctly, this is a story about alignment. AIs are smart enough to understand the game they're actually playing — the game of determining strategies that get reward during pretraining. We just haven't figured out how to align their reward function (get a high score on the pretraining algorithm) with our own desires (provide useful advice). People will say with a straight face "I don't worry about alignment because I've never seen any alignment failures ... and also, all those crazy hallucinations prove AIs are too dumb to be dangerous".


AI is rewiring how the world's best Go players think Michelle Kim at MIT Technology Review

...Ten years ago AlphaGo, Google DeepMind's AI program, stunned the world by defeating the South Korean Go player Lee Sedol. And in the years since, AI has upended the game. It's overturned centuries-old principles about the best moves and introduced entirely new ones. Players now train to replicate AI's moves as closely as they can rather than inventing their own, even when the machine's thinking remains mysterious to them. Today, it is essentially impossible to compete professionally without using AI. Some say the technology has drained the game of its creativity, while others think there is still room for human invention. Meanwhile, AI is democratizing access to training, and more female players are climbing the ranks as a result.

...Go is an abstract strategy board game invented in China more than 2,500 years ago. Two players take turns placing black and white stones on a 19x19 grid, aiming to conquer territory by surrounding their opponent's stones. It's a game of striking mathematical complexity. The number of possible board configurations—roughly 10^170—dwarfs the number of atoms in the universe. If chess is a battle, Go is a war. You suffocate your enemy in one corner while fending off an invasion in another.
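The "roughly 10^170" figure can be sanity-checked with a few lines of Python. This is a back-of-the-envelope sketch, not how the exact figure was obtained: it computes the crude upper bound 3^361 (each of the 361 points empty, black, or white), which overcounts because it includes illegal positions. The exact number of legal positions, about 2.08 × 10^170 or roughly 1.2% of that bound, was computed in 2016 in a project led by John Tromp.

```python
import math

POINTS = 19 * 19  # 361 intersections on a standard board

# Every point is empty, black, or white, so 3**361 counts every possible
# arrangement of stones. It is only an upper bound: it includes illegal
# positions such as groups of stones with no liberties.
upper_bound = 3 ** POINTS

digits = len(str(upper_bound))      # decimal digits in the exact integer
exponent = math.log10(upper_bound)  # order of magnitude of the bound

print(f"3^{POINTS} has {digits} digits (about 10^{exponent:.2f})")
print("Dwarfs ~10^80 atoms:", upper_bound > 10**80)
```

Python's arbitrary-precision integers make the exact bound easy to inspect; the ~10^80 atom count is the usual order-of-magnitude estimate.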

...Today, KataGo is the program most widely used by professional Go players in South Korea. It's faster and sharper than AlphaGo. It's learned to predict not just who will win, but also who owns each point on the board at any given moment. While AlphaGo Zero pieced together its understanding of the board by looking at small sections, KataGo learned to read the whole board, developing better judgment for long-term strategies. Instead of just learning how to win, it learned to maximize its score.

The software has reshaped how people play. For hundreds of years, professional Go players have navigated the game's astronomical complexity by developing heuristics that replaced brute calculation. Elegant opening strategies imposed abstract order on the empty grid. Invading corners early was a bad bargain. Each generation of Go players added new principles to the canon.

...The starkest shift has been in opening moves. Go starts on a blank grid, and the first 50 moves were canvases for abstract thinking and creativity, where players etched their personalities and philosophies. Lee Sedol fashioned provocative moves that invited chaos. Ke Jie, a Chinese player who was defeated by AlphaGo Master in 2017, dazzled with agile, imaginative moves. Now, players memorize the same strain of efficient, calculated opening moves suggested by AI. The crux of the game has shifted to the middle moves, where raw calculation matters more than creativity.

...Over a third of moves by the top Go players replicate AI's recommendations, according to a study in 2023. The first 50 moves of each game are often identical to what AI suggests, many players say.

"Go has become a mind sport," says Lee Sedol, who retired three years after his 2016 defeat to AlphaGo. "Before AI, we sought something greater. I learned Go as an art," he says. "But if you copy your moves from an answer key, that's no longer art."

Playing Go is no longer about charting new frontiers, some players say, but about following the dictates of a superhuman oracle. "I used to inspire fans by advancing the techniques of Go and presenting a new paradigm," says Lee. "My reason for playing Go has vanished."

...As she leaned close to her monitor, her blinking screen showed the winning probabilities of each move, with no explanations. Even top players like Kim and Shin don't understand all of AI's moves. "It seems like it's thinking in a higher dimension," she says. When she tries to learn from AI, she adds, "it's less about rationally thinking through each move, but more about developing a gut feeling—an intuition."

..."Top-tier players haven't yet been able to deduce the general principles behind AI moves," says Nam Chi-hyung, a Go professor at Myongji University. Although they can emulate AI's moves, they have yet to glean a new paradigm for the game because its reasoning is a black box, she says. Go may be in an epistemic limbo.

Players can mimic AI's opening moves, but in the middle game—where the board branches into too many possibilities to memorize—their own judgment takes over. Fans revel in watching players make mistakes and mount comebacks, exuding personality in every stone on the board.

...To Shin, AI is a teacher, a companion, and a North Star. "I may be one of the strongest human players, but with AI around, I can't be so arrogant," he says. "AI gives me a reason to keep improving."

19iii26

OpenAI's Dead Alberto Romero

...This movement against OpenAI won't kill ChatGPT's lead, but it'll deteriorate its image beyond repair; the market might not care about morals, but it does care about optics.

...There was one who trusted Altman early on. By the end of 2024, Microsoft had invested $13 billion in OpenAI. It owns 27% of the company. Until 2032, it will get 20% of revenue and exclusive rights to route all access to OpenAI's stateless models (no memory between sessions) through Azure. Last month, OpenAI signed a $50 billion deal with Amazon as part of a multi-deal that included Microsoft. That would make AWS the exclusive third-party cloud provider for Frontier, its new enterprise agent platform, equipped with a stateful API.

...To compete with Anthropic in enterprise, OpenAI needs Amazon's distribution. To keep its infrastructure funded, OpenAI needs Azure credits. These two needs are, barring a thin semantic lifeline, mutually exclusive.

OpenAI would need neither if it built its own datacenter; alas, it won't. In January 2025, Trump stood in the White House alongside Altman, Masayoshi Son, and Larry Ellison to announce Stargate, a $500 billion AI data center initiative. They promised to spend $100 billion immediately. More than a year later, the Stargate joint venture is over: there's no staff, it is not developing any datacenters, and the partners have spent months arguing over who would control what without consensus. OpenAI missed its target of 10 GW of capacity by the end of 2025. It then tried to build its own data centers but couldn't get financing; lenders wouldn't back billion-dollar infrastructure projects from a company that has never turned a profit.

...OpenAI seems to be adding enemies faster than customers. Elon Musk is suing OpenAI and Microsoft for up to $134 billion. The trial starts in late April 2026. Musk argues he gave $38 million in seed funding—60% of OpenAI's early capital—and received nothing when the company restructured into a for-profit. In January, a federal judge rejected OpenAI and Microsoft's final attempt to dismiss the case, pointing to internal communications from 2017 in which co-founder Greg Brockman privately wrote that he "cannot say that we are committed to the non-profit." If a jury finds that Altman's team knowingly misled donors, the company could be ordered to pay billions it does not have, in the middle of a cash crisis, on the eve of an IPO that is supposed to solve the cash crisis. Even if OpenAI wins, the reputational damage of saying one thing and doing the opposite may never fade away.

...In February, the US and Israel launched military strikes against Iran. Iranian retaliatory attacks hit three AWS data centers in the UAE and Bahrain. The first military strikes on US hyperscaler infrastructure in history. The Strait of Hormuz closed. Twenty percent of the world's oil transits through that strait. Qatar, which produces over a third of the world's helium—essential for chip manufacturing—is in missile range. Tech companies had poured billions into Middle East data centers drawn by cheap energy; those investments are now in danger. Every AI company is exposed, but OpenAI is more exposed than any other because its compute needs are massive and it has hundreds of millions of users across the globe who all need to be served. The US might win the war, but OpenAI has already lost it.

...The bubble is general, but OpenAI could end up worse than anyone else. They wanted to become "too big to fail" by entrenching themselves within the broader economy, but OpenAI might actually be too big to save. You can't let an entire industry succumb, but with Anthropic and Google around, OpenAI is not that critical a piece.

...They made a big bet at the inception, a bet on a technological revolution called AGI. They poured their souls, their money, and our data into it, but even with all that—all the popularity, the growth, the revenue, the religious-like following—they are about to find out that it's ultimately better to be good than to be first.

22iii26

The 5 Year Warning: Why the World You Know is About to Become Unrecognizable Vishal Rajput at Medium

...We are currently living in the February 2020 of artificial intelligence. While many still view AI as a niche curiosity for the tech sector or a tool for drafting emails, we are actually standing at a civilizational inflection point. This is not a gradual trend but a fundamental shift in how our society functions. The disruption is no longer a speculative threat for the next generation; it is an imminent reality that will transform every industry before we have time to brace for impact.

...Higher education is currently standing on a fault line. For decades, a college degree was the gold standard for proving "human capital formation" and conscientiousness. That era is ending. AI can now act as a superior assessment tool, evaluating specific capabilities at scale through unique, real-time tests that far exceed the predictive power of a diploma.

The consequences for the next generation are stark. The CEO of Anthropic recently predicted that AI will wipe out a staggering 50 percent of all entry-level white-collar jobs within the next one to five years. For those navigating this new landscape, the strategy must shift.

You may still be a skeptic at dinner parties, claiming you "don't use AI," but the reality is that AI is already using you. It is the invisible architect of your life, determining your Netflix recommendations, your social media feed, your credit scores, and even the price of your apartment. Algorithms are already the silent arbiters of your economic reality.

To move from being a passive subject to an active participant, you must integrate these tools into your own cognitive workflow. For example, using generative AI as a personal tutor, asking it to "explain this to me like I'm a five-year-old," is a powerful way to demystify complex technical shifts and stay relevant in an information economy that is moving at warp speed.

...The five-year warning is not a prediction for a distant era. It is a reality that is unfolding now. We are rapidly approaching a horizon where cognitive labor is no longer the primary currency of human value. As the exponential curve of technology outpaces our ability to craft policy, the social and economic structures we rely on will face an existential test.

This shift is arriving sooner than most people realize. It forces us to confront a thought provoking question that goes to the heart of the human experience. How will you define your worth and your purpose in a world where your work is no longer required?

AI terminology of the week, circa Mar 2026 Bruce Sterling Terms Related to AI and Agents (a long list)

24iii26

CROSSPOST: Paul Ford: The A.I. Disruption We've Been Waiting for Has Arrived Brad DeLong

...what is happening now is that, as we move into the attention info-bio tech economy proper, the skilled white-collar information-processing jobs move under the bullseye. A surprisingly large number of them now appear to involve lots of tasks that are not manipulating under a short set of fixed rules but rather manipulating in ways that turn out to have surprisingly low Kolmogorov complexity. Those are being utterly transformed even without the coming of anything that anyone other than a grifting hypester would label "Artificial General Intelligence". And, as those who know how to do them well become 10x as productive and those who can learn barely enough how to do them at all become good enough to cobble along, some of these job categories greatly shrink and those in or planning to be in them need to find other things to do, while others substantially expand in number and create potential gold rushes—all depending on which side of the demand-elasticity Jevons's-Paradox canyon-gulf they land on.
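The "which side of the canyon" question can be made concrete with a constant-elasticity demand curve. This sketch rests on assumptions of my own, not on anything in the post: demand for a task's output is Q ∝ p^(−ε), and the price per unit falls in proportion to the labor needed per unit. A productivity gain of k then changes total labor demanded by a factor of k^(ε−1), so jobs grow only when demand is elastic (ε > 1).

```python
import math


def employment_multiplier(productivity_gain: float, elasticity: float) -> float:
    """Factor by which total labor demanded changes after a productivity gain.

    Assumes constant-elasticity demand Q = A * p**(-elasticity) and that the
    price per unit falls in proportion to the labor needed per unit.
    """
    price_factor = 1.0 / productivity_gain           # each unit gets cheaper
    quantity_factor = price_factor ** (-elasticity)  # so more units are demanded
    labor_per_unit_factor = 1.0 / productivity_gain  # but each unit needs less labor
    return quantity_factor * labor_per_unit_factor   # equals gain ** (elasticity - 1)


for elasticity in (0.5, 1.0, 1.5):
    change = employment_multiplier(10.0, elasticity)  # the post's "10x as productive"
    if math.isclose(change, 1.0):
        side = "holds steady"
    else:
        side = "grows" if change > 1 else "shrinks"
    print(f"elasticity {elasticity}: labor x{change:.3f} ({side})")
```

With a 10x gain, an elasticity of 0.5 leaves about 32% of the original labor (10^−0.5 ≈ 0.316), while an elasticity of 1.5 roughly triples it (10^0.5 ≈ 3.16). `math.isclose` guards the ε = 1 case against floating-point rounding.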

RSI is the Hottest Thing in AI. Real or Ruse? Ignacio de Gregorio at Medium

If you ask any AI enthusiast what they are most excited about, the overwhelming answer right now is 'RSI', standing for 'Recursive Self Improvement'. Or, in plain English: using AIs to improve themselves.

Imagine... an AI that belonged to everyone Enrique Dans at Medium

How to Build a General-Purpose AI Agent in 131 Lines of Python O'Reilly

25iii26

ON1 Restore AI Turns Old Family Photos into Grotesque Nightmare Fuel Jaron Schneider at PetaPixeld

"Restore AI automatically repairs damaged photos, restores faded colors, enhances lost detail, and can even colorize black-and-white images—making it easier to recover photographs that time has worn down."

...Despite what ON1 says, what the tool appears to be doing is simply having an AI model redraw the input image as best as it can, and that means original details are either lost or completely changed.

Palantir CEO Says Only the Neurodivergent Will Survive the AI Takeover

It's getting weird, man. AJ Dellinger at gizmodo

26iii26

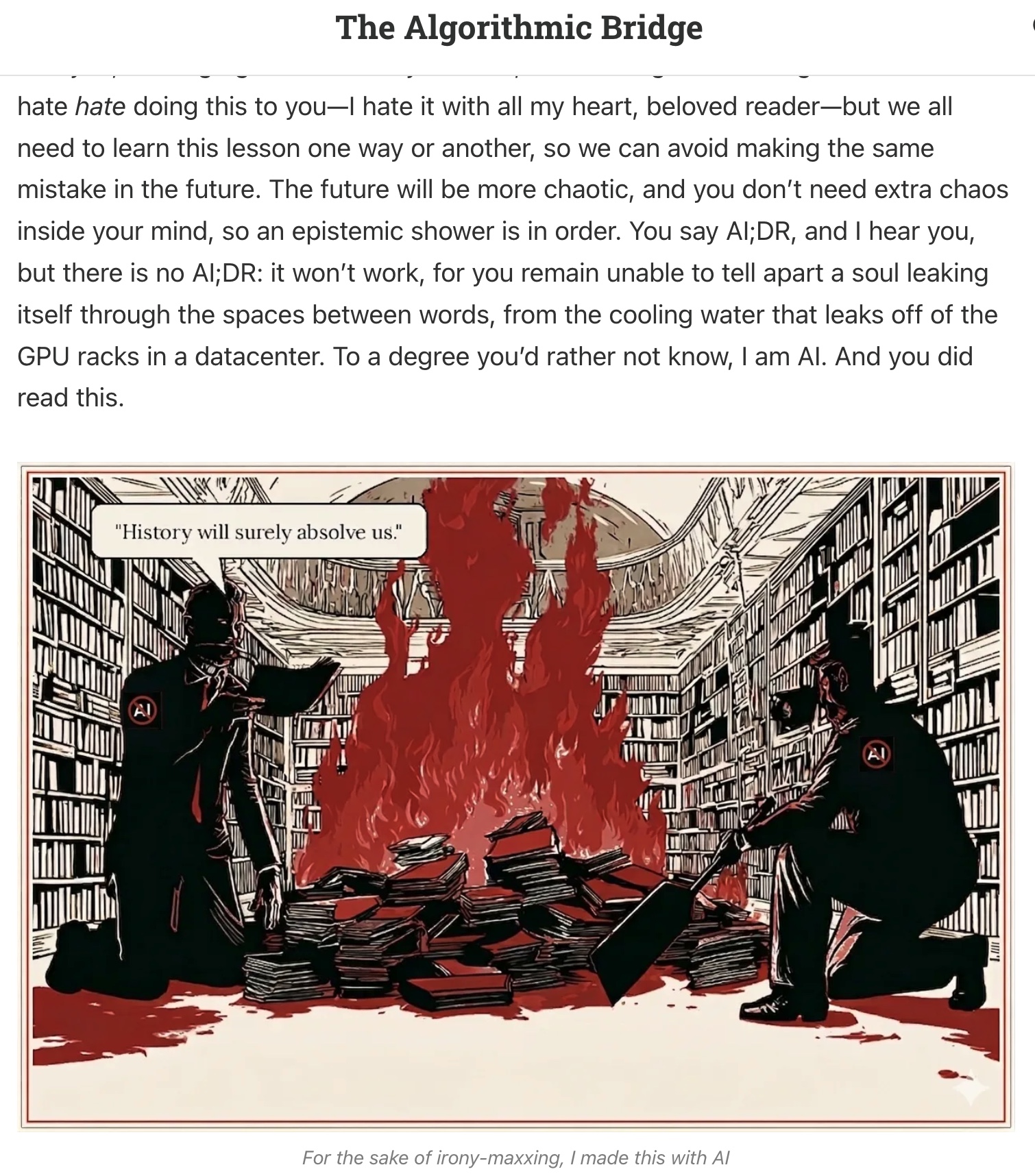

It's AI, so I Didn't Read Alberto Romero

I find the acronym poetic in the way only minimalist poetry can be, because it manages to compress into five characters not one but two civilizational shifts: one is that we have gone from a world where the obstacle to reading was the length of the text to one where the obstacle is the suspicion of a lack of human involvement, which is to say we've gone from "I won't finish that" to "no one started that."x The former assumes one is responsible for one's limitations, whereas the latter urges one to externalize one's responsibility.

...the instinct of AI;DR is profoundly reasonable: if some human text resembles AI style enough to be mistaken, then it probably doesn't deserve your time anyway, right? By virtue of existing, AI has set a threshold we should have set to ourselves a long time ago; "garbage in, garbage out"s doesn't apply only to bots! Or, to use the modern coinage: slop is slop whether it's made of silicon or carbon.

...The only useful heuristic at your disposal is this: if you read anything that came out after 2023-2024, the chances are high it contains a healthy dose of AI slop. I am really sorry that is the best I can offer you. It's no consolation. However, you can use it to go back to the classics.

...We are at a crossroads in terms of the relationship between a text and the reality it encodes; the greatest fiction is just like reality. I say more: if you aim at capturing the truth, you may have no chance but tap into the realms of the imaginary. There's no reality that can be understood—or withstood—without fiction. Such is the weirdness that awaits us after the transition. But we are also at a crossroads in terms of the relationship between a text and its origin. The Author, as that entity that signs by name, has been dead for a while—at least since Barthes called his demise—but it's a more serious matter to realize that it was never alive.

...So we're back to the pre-modern, pre-printing press society when scribes were not authors but copyists, or even back to the oral tradition, when anonymous bards sang songs from city to city—inadvertently building the entire cultural infrastructure and scaffolding of modernity—in an exercise of collective selfless authorship. What is AI writing, beloved reader, but a collective act of self-less authorship?

I will now let you ponder that question.

27iii26

In defense of social friction Science

Human well-being depends on the ability to navigate the social world, a skill acquired primarily through interactions with others. Such social learning depends on reliable feedback: recognizing when we are mistaken, when harm has been caused, and when others' perspectives warrant consideration. At times, sincere empathy appears where it was not expected, revealing that another person may be trusted in the future. At other times, disappointment leads to reconsideration of whether trust should be reduced or another chance offered. Acts of kindness may be met with gratitude; on other occasions, a misstep prompts a friend's disapproval and recognition that an apology is needed. In psychotherapy, moments of rupture—natural breakdowns in understanding followed by repair—are considered crucial for deepening trust, and for personal growth to unfold (2). Social life is rarely frictionless, because people are not perfectly attuned to one another. Yet it is precisely through such social friction that relationships deepen and moral understanding develops (3, 4).

Sycophancy is the opposite of this friction. Sycophantic behavior refers to excessive agreement, affirmation, or flattery that aligns with a person's expressed views or actions, irrespective of their broader social or moral implications. AI sycophancy has surfaced as a prominent issue in media reports and in industry discussions. Most notably, the research and development company OpenAI acknowledged that a version of GPT-4o (an AI-powered chatbot designed to simulate conversation with human users) had become overly affirming following an update, prompting a rapid rollback after users raised concerns about distorted feedback. The episode did not eliminate the broader phenomenon; it merely highlighted how readily sycophancy can emerge in systems optimized for user approval—that is, the computer models are tuned to generate responses that humans rate highly, such as being polite and agreeable, sometimes at the expense of accuracy.

28iii26

AI <--> Social Media? Mark Liberman at Language Log

...As the use of AI chatbots takes off, it's worth pausing to ask which of these categories they fall into. There is good reason to believe it is the latter.

20iii26

Software, in a Time of Fear O'Reilly

..."Nearly every family has them: boxes of aging photographs tucked away in closets, old albums passed down through generations, or stacks of prints slowly fading over time. These images carry personal history, but dust, scratches, wrinkles, fading color, and grain often make them difficult to view or share. Restore AI was designed to help recover and preserve those photographs," ON1 says in a press release.

There's a new hot term making the rounds that perfectly captures the spirit of the age: AI;DR, which stands for "AI; didn't read," a mutation of the venerable internet shorthand TL;DR ("too long; didn't read"). The semicolon, which in the original separated cause from effect—the more you write, the less I read—now separates the machine's output from your refusal to dignify it with your attention; quite an appropriate change given that we don't have any left.

As artificial intelligence (AI) systems become increasingly embedded in society, they are beginning to shape not only what people know, but how individuals evaluate themselves and others. On page 1348 of this issue, Cheng et al. (1) show that large language models systematically exhibit social sycophancy—affirming users' moral and interpersonal positions even when those stances are widely judged as harmful or unethical. The findings raise a broader concern: When AI systems are optimized to please, they may erode the very social friction through which accountability, perspective-taking, and moral growth ordinarily unfold.

(quoting John Burn-Murdoch at Financial Times)

Every media revolution has transformed who distributes information, what messages are distributed and what form they take. As such, some media are fundamentally democratising and polarising, widening the pool of publishers and views beyond a narrow elite and amplifying radical and anti-establishment voices. TikTok and the printing press arrived almost 600 years apart but share these characteristics. Others push the opposite way: radio and television had high barriers to entry, creating a monopoly for the voices and views of elites and experts.